news

[Amazon Fulfillment Technologies and Robotics (FTR)], job offer

Would you like to work on state-of-the-art computer vision models that see and process millions of images every day, affecting millions of customers? Our science team at Amazon Fulfillment Technologies and Robotics in Berlin (Germany) is hiring excellent scientists to work on our visual item understanding and defect detection solutions.

Point of contact: Sebastian Höfer (hoefersh@amazon.de)

>Apply now

Punching Cards podcast from Science of Intelligence

What makes a person, a cat, a bonobo, a dolphin, an octopus, a cockatoo intelligent? What is it that these living organisms have or do, when we say that they are intelligent? And what about things, computer programmes, robots? Can they be intelligent? If so, how? If not, why not?

[rokit] "Investigation on human interaction with robots in public spaces" by rokit consortium

In our research, we develop interdisciplinary design approaches as well as test and inspection procedures that meet ethical, social, and legal requirements, with the ultimate goal of enabling the use of robots in public spaces.

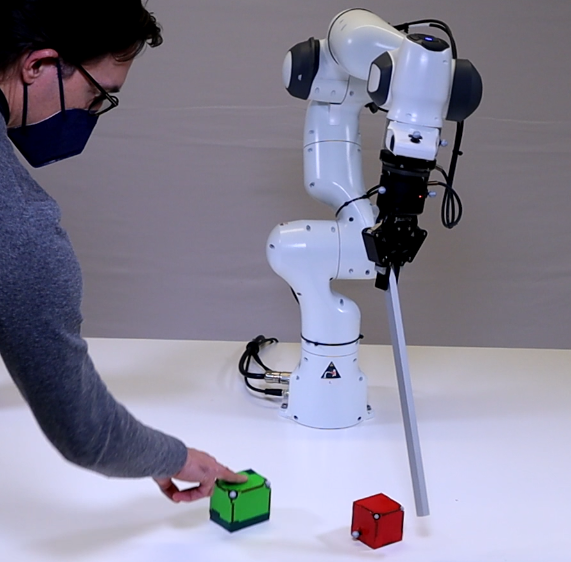

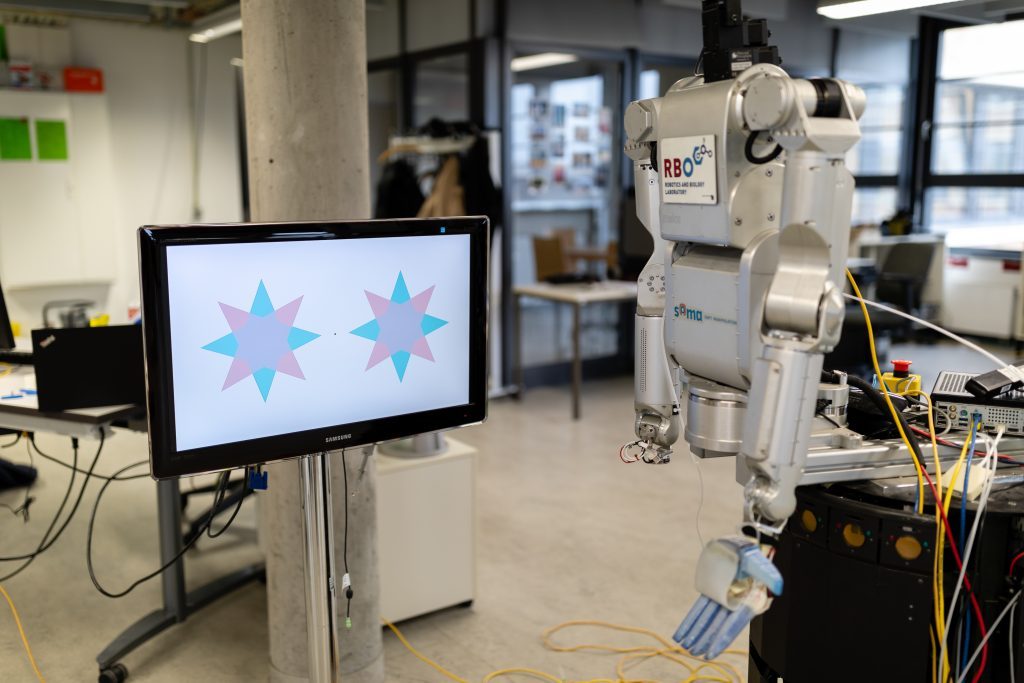

[RBO Lab, TU Berlin] "One Object at a Time: Accurate and Robust Structure From Motion for Robots" by Aravind Battaje

A robot that fixates like a human perceives the distance to the fixated object and the relative positions of surrounding objects immediately, accurately, and robustly.

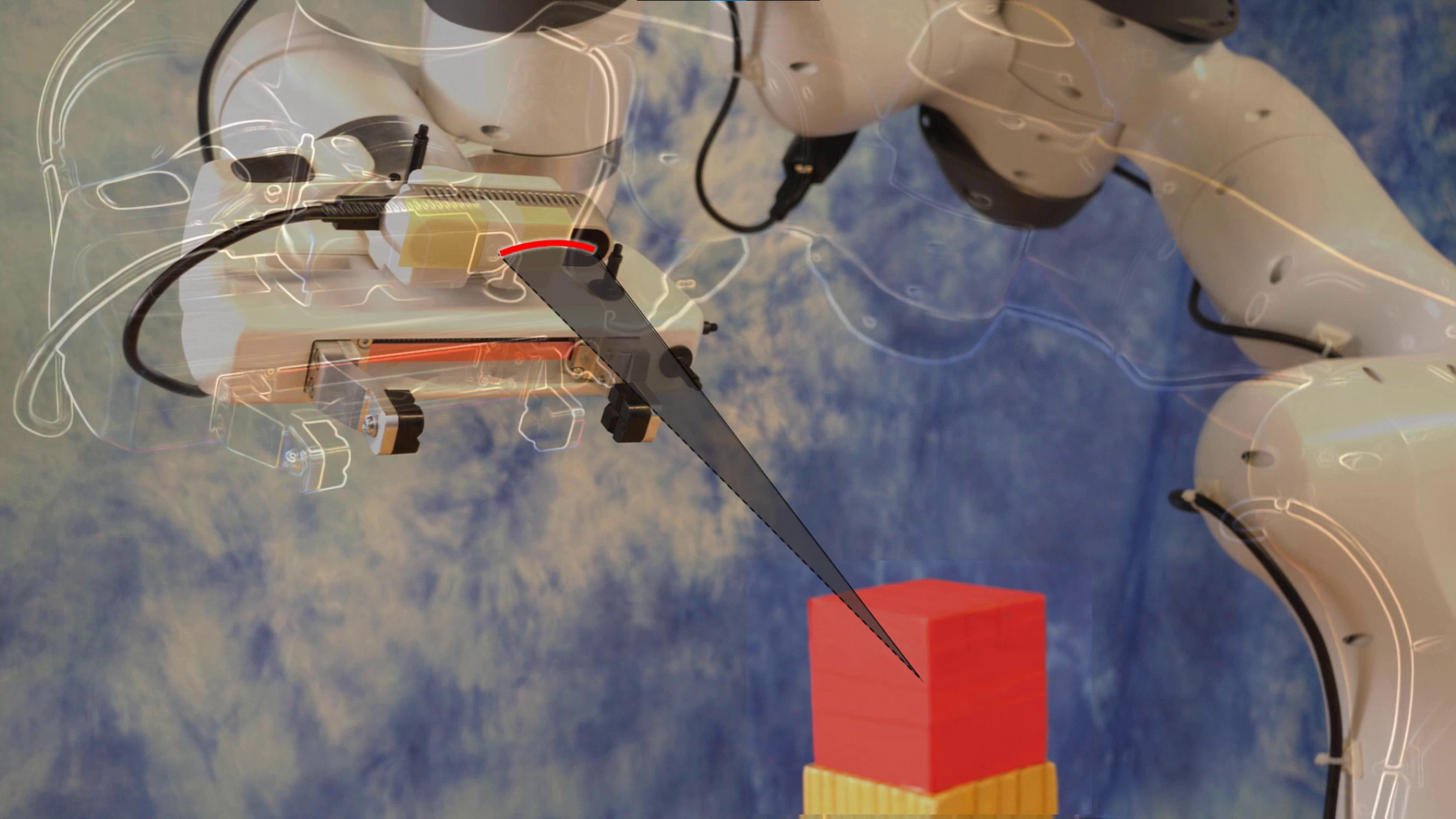

[LIS Lab, TU Berlin] "Sequence-of-Constraints MPC: Reactive Timing-Optimal Control of Sequential Manipulation" by Marc Toussaint, Jason Harris, Jung-Su Ha, Danny Driess, Wolfgang Hönig.

With this research, we want to build on our previous methods for physical reasoning and extend them towards highly reactive robotic manipulation that is robust to interference from an experimenter.

[RBO Lab, TU Berlin] "Active Acoustic Contact Sensing for Soft Pneumatic Actuators" by Gabriel Zöller, Vincent Wall, Oliver Brock.

We present an active acoustic sensor that turns soft pneumatic actuators into contact sensors.

>More details: Paper and video material

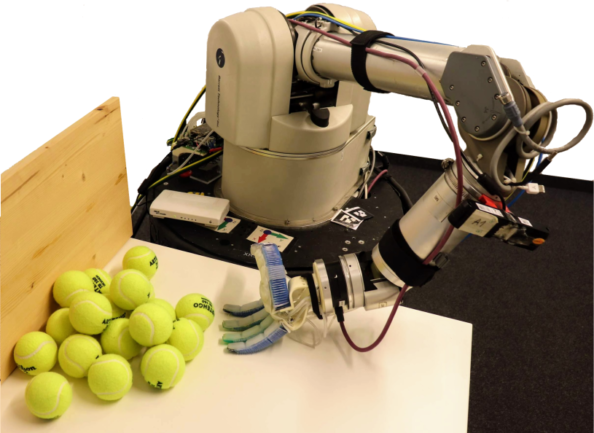

[RBO Lab, TU Berlin] "Analysis of Open-Loop Grasping From Piles" by Előd Páll and Oliver Brock

We identified a regularity in objects’ motion when pushed, namely, an object separates and stabilizes in front of the pusher. We devise an open-loop grasping strategy leveraging this regularity in piles of nearly identical objects.

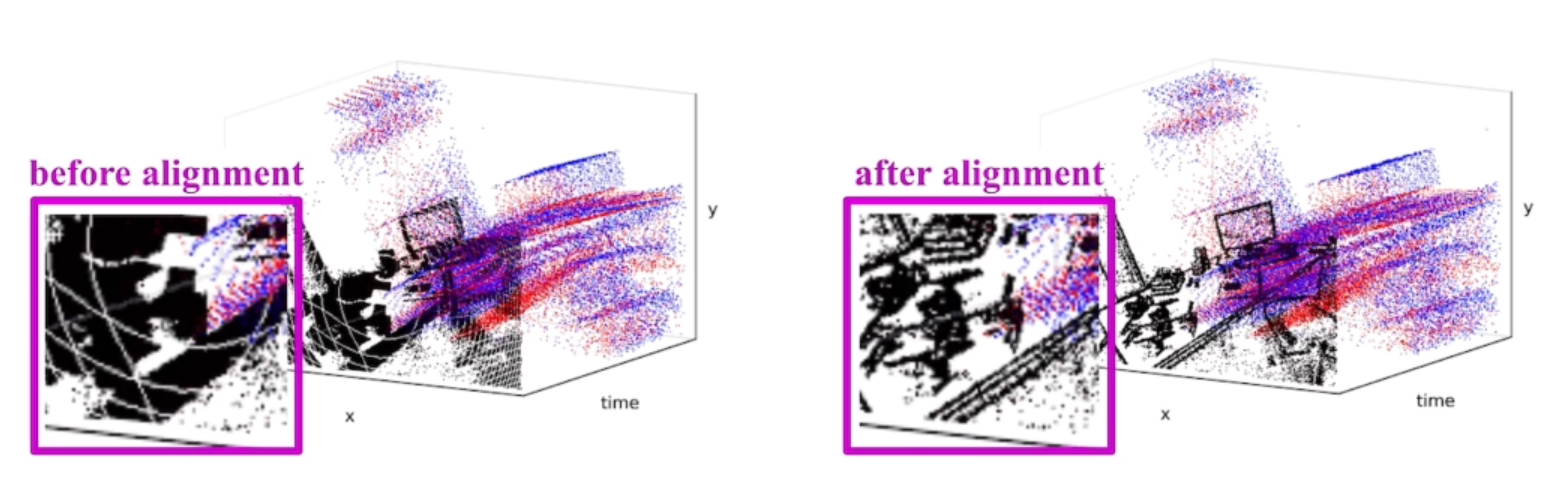

[RBO Lab, TU Berlin] "The Spatio-Temporal Poisson Point Process: A Simple Model for the Alignment of Event Camera Data" by Cheng Gu, Erik Learned-Miller, Daniel Sheldon, Guillermo Gallego, Pia Bideau

In this work we propose a new model of event data that captures its natural spatio-temporal structure.

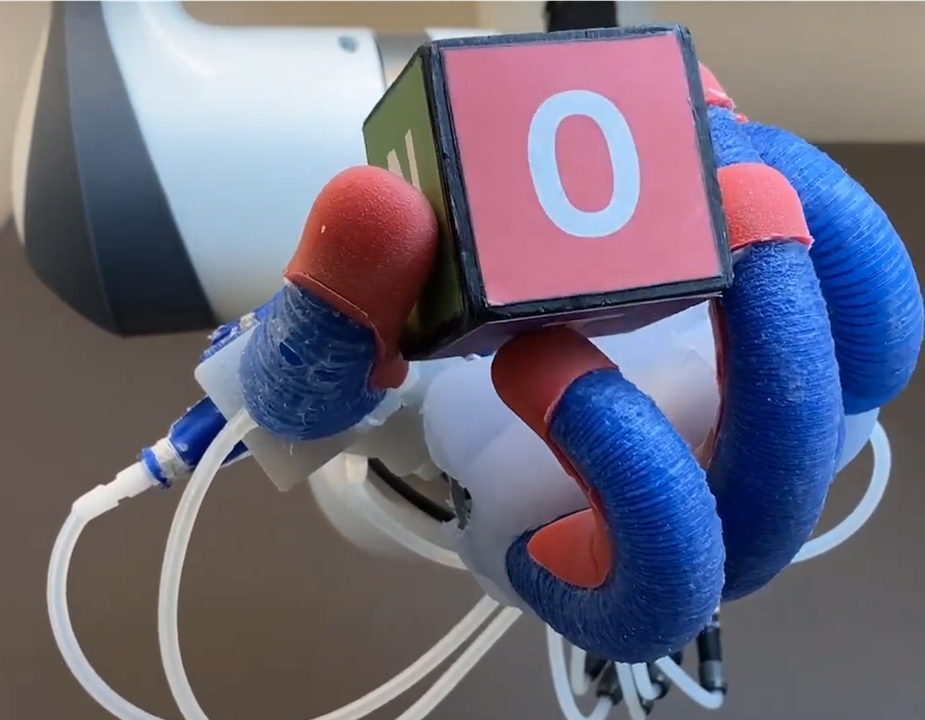

[RBO Lab, TU Berlin] "In-Hand Manipulation" by Adrian Sieler, Aditya Bhatt

Unlike most robot grippers, human hands are versatile manipulators: they are soft, compliant, dexterous, and can be used for multiple tasks at once. We want robotic hands to be similarly versatile.

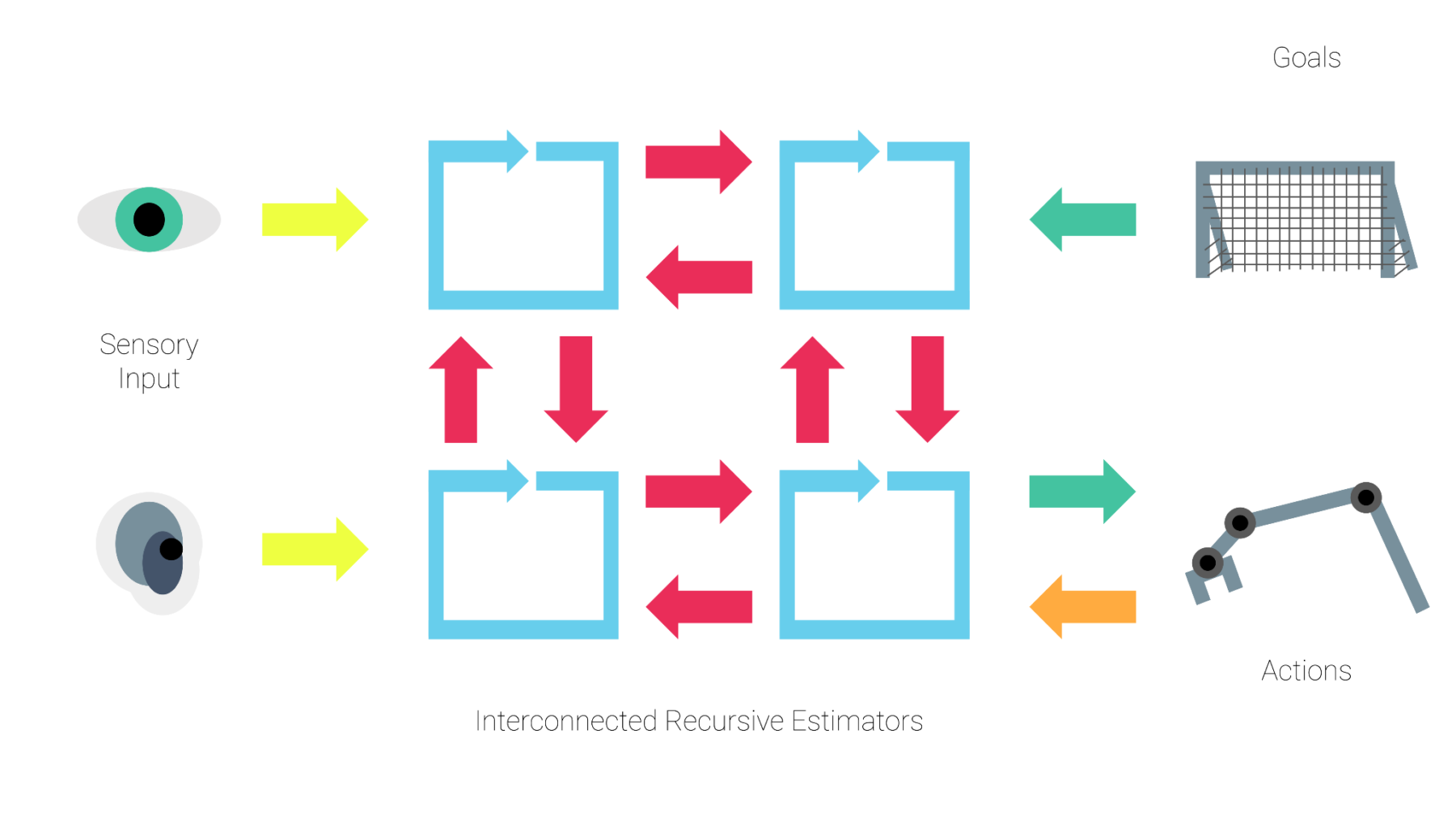

[RBO Lab, TU Berlin] "A Promising Principle of Intelligence from Robotics" by Vito Mengers

Drawing on previous robotics research, we propose a computational principle for mapping sensory inputs to suitable actions: extracting the task-relevant information from the sensory input and generating suitable actions in an integrated manner.

[RBO Lab, TU Berlin] "A study on human and robot perception and the architecture of perceptual information processing" by Aravind Battaje

This research project investigates human and robot perception and aims to develop a constructive understanding of perceptual information processing.

>More details: Paper and video material

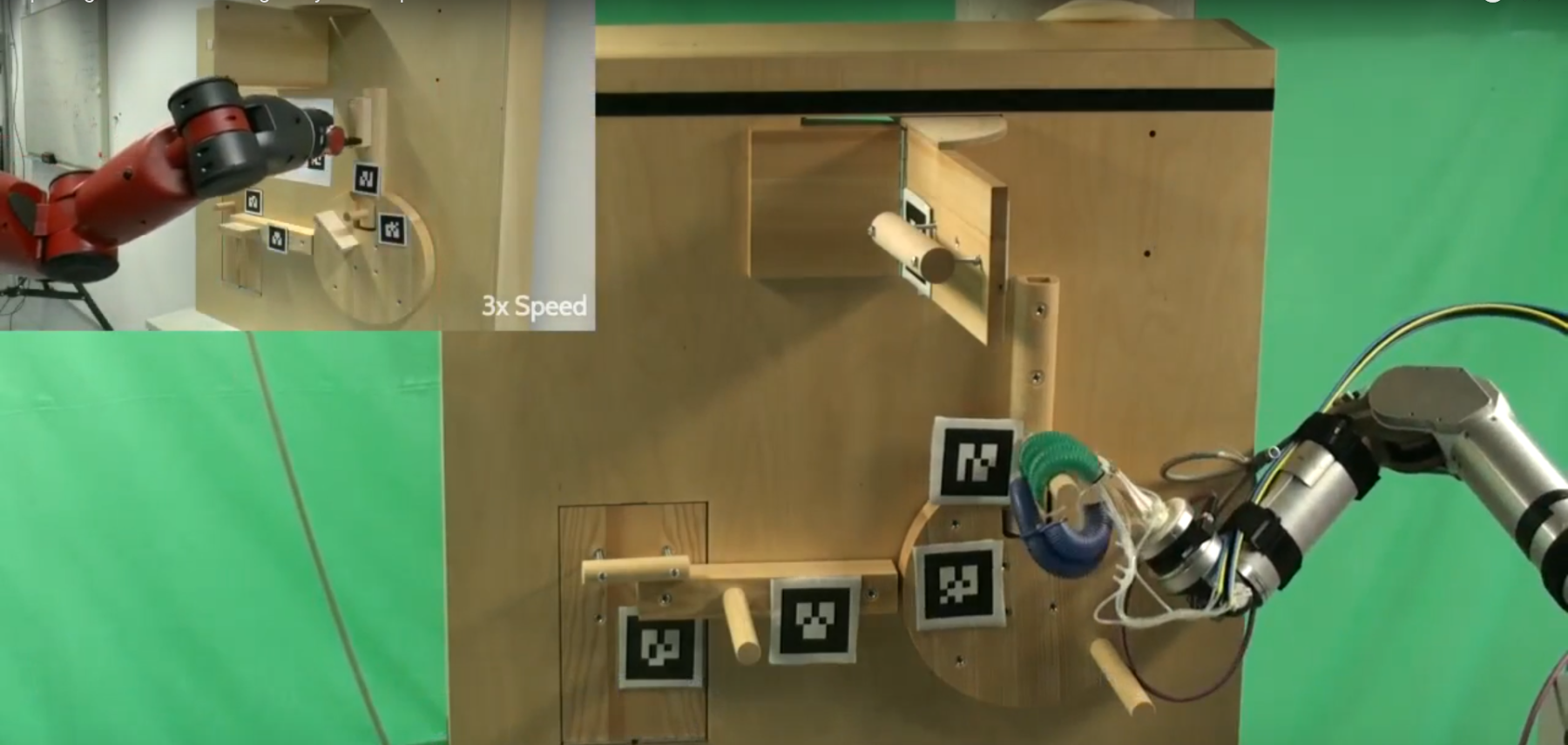

[RBO Lab, TU Berlin] "Rational Selection of Exploration Strategies in an Escape Room Task" by Oussama Zenkri

In our research, we aim to understand the multi-strategy model and assess its contribution to increasing flexibility and adaptability in robotic problem-solving.

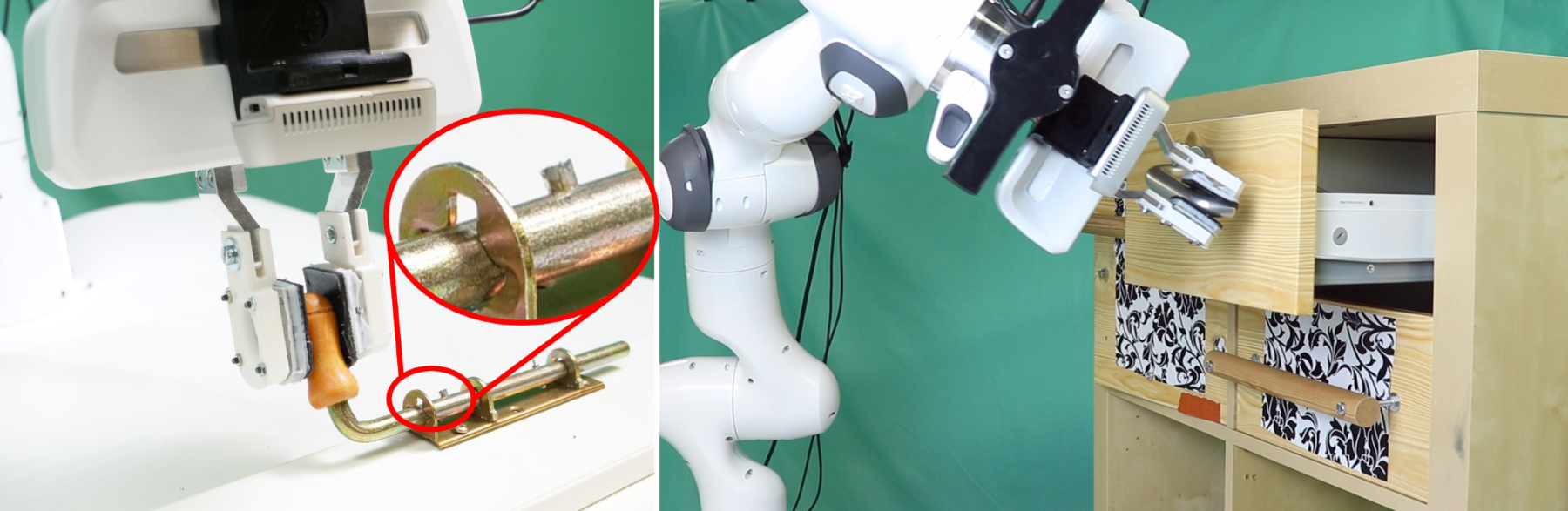

[RBO Lab, TU Berlin] "Learning Task-Directed Interactive Perception Skills" by Manuel Baum

Leveraging interactive perception, we try to design robots that actively explore their environment, in a way reminiscent of how a baby explores a new toy.

>More details: Paper and video material

[RBO Lab, TU Berlin] "Learning from Demonstration by Exploiting Environmental Constraints" by Xing Li

In this project, we will develop a system that allows a robot to acquire new skills by imitating a user solving an escape room task.